CSETv0 Taxonomy Classifications

Taxonomy Details

Problem Nature

Specification, Robustness

Physical System

Vehicle/mobile robot

Level of Autonomy

High

Nature of End User

Amateur

Public Sector Deployment

No

Data Inputs

360 Ultrasonic Sonar, Image Recognition Camera, Long Range Radar, traffic patterns

CSETv1 Taxonomy Classifications

Taxonomy Details

Incident Number

52

Estimated Date

No

Lives Lost

0

Injuries

0

Estimated Harm Quantities

No

There is a potentially identifiable specific entity that experienced the harm

No

Risk Subdomain

5.1. Overreliance and unsafe use

Risk Domain

Human-Computer Interaction

Entity

Human

Timing

Post-deployment

Intent

Unintentional

Incident Reports

Reports Timeline

Tesla Motors issued a statement on June 30 after the National Highway Traffic Safety Administration (NHTSA) opened an investigation into a fatal crash involving a Model S driven by the…

Tesla noted that drivers must manually enable the Autopilot system: "Autopilot is getting better all the time, but it is not perfect and still requires the driver to remain alert."

When NHTSA opened…

CANTON, Ohio - Joshua Brown loved his all-electric Tesla Model S so much that he nicknamed it Tessy. And he celebrated the Autopilot feature that let him cruise down highways, making YouTube videos of himself driving…

Joshua Brown, the 40-year-old man who died in a crash while using his Tesla Model S's Autopilot system, was watching a Harry Potter film when his car collided with a tractor-trailer. That is according to Frank Baress…

Joshua D. Brown, the first person to die in a Tesla Autopilot crash, was watching a "Harry Potter" film when his vehicle collided with a tractor-trailer, according to the surviving truck driver.

“It was still …

The former Navy SEAL who became the first person to die in a self-driving car crash was reportedly watching a Harry Potter film when his Tesla vehicle crashed into a truck.

Joshua D. Brown, 40…

FILE - In this Monday, April 25, 2016, file photo, a man sits behind the steering wheel of a Tesla Model S electric car on display at the Beijing International Automotive Exhibition in Beijing. Federal authorities…

WASHINGTON - A man who was allegedly watching a Harry Potter film in a Tesla on “autopilot” died when the car crashed into a trailer on May 7, a witness said, in what the US government says …

AutoGuide.com

The 40-year-old man who recently died in a Tesla Autopilot crash was reportedly watching Harry Potter.

Yesterday, Tesla published a blog post about the first death of a driver…

The former US Navy SEAL believed to be the first person to die at the wheel of a self-driving car had received eight speeding tickets in the past six years. It was also reported that Joshua Brown may…

Joshua D. Brown, the first person to die in a crash involving Tesla's Autopilot, was watching a "Harry Potter" film when the vehicle collided with a tractor-trailer, according to the surviving truck driver.

"Still …

A preliminary investigation has been opened into a fatal crash involving a Tesla Model S. According to the National Highway Traffic Safety Administration, the electric sedan had engaged the …

The driver in the first known fatal self-driving car crash was also traveling so fast that "he went so fast through my trailer I didn't see him," said the driver of the truck involved.

Joshua Brown, 40, had a DVD player inside his Tesla at the time of the crash, meaning he could have been watching a Harry Potter film despite earlier claims by the car company…

On Thursday, the National Highway Traffic Safety Administration (NHTSA) opened a preliminary investigation to determine whether a defect in Tesla's Autopilot feature caused the death of 40-year-old Josh Brown,…

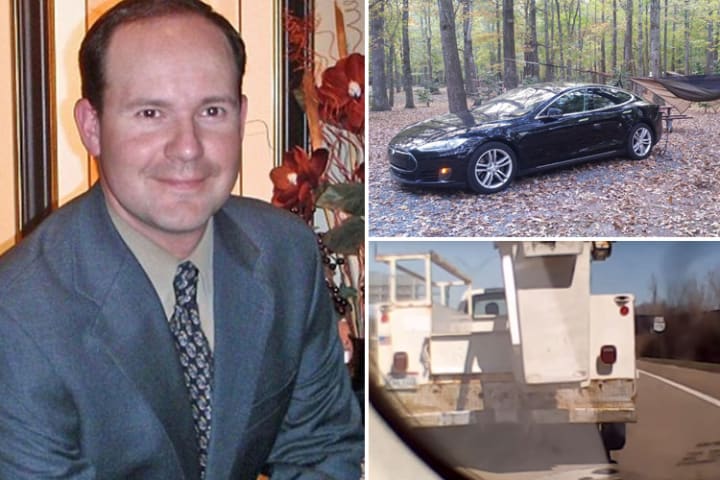

A still from a YouTube video showing Joshua Brown in the driver's seat of his Tesla Model S with no hands on the steering wheel as he demonstrates the car's self-driving mode. Photo: AP

The touchscreen on the Model S dashboard does not play video, but the victim of a fatal crash had a portable DVD player in his car.

Robyn Beck/AFP/Getty Images

Joshua Brown, 40, believed in the power of…

Tesla crash: DVD player found in the wreckage of the car, amid reports that the driver was watching Harry Potter

Updated

A DVD player was found in the Tesla car that was in…

The Tesla driver who died in a collision with a truck on May 7 may have been watching a Harry Potter film at the time of the crash while the car was in Autopilot mode, according to a witness. Josh…

The man who died in the first known fatal crash of a self-driving car may have been watching one of the Harry Potter films when the collision occurred.

According to the driver of the truck struck by the car of Joshua…

A Florida Highway Patrol trooper at the scene said a Harry Potter film was playing on the DVD player. Another witness, Terence Mulligan, said he arrived at the scene before the first state trooper from …

The Tesla owner who died inside his self-driving car was watching a Harry Potter film at the time, according to a witness.

Robert VanKavelaar told Inside Edition that the wrecked car of Joshua…

There is a small but important new detail in the investigation of the fatal Tesla Model S crash that occurred while the Autopilot system was engaged. We have now learned that the Florida Highway Patrol confirmed that…

A witness who was among the first people to reach the scene of a fatal Tesla crash in Florida last year told federal investigators that he did not see or hear a video in the car moments…

US crash investigators have opened their files on a fatal highway collision between a Tesla Model S and a truck, confirming Tesla's earlier statement that its Autopilot failed to notice that …

The top third of a Tesla Model S was sheared off in a fatal collision with a tractor-trailer in Florida last year. Robert VanKavelaar/Handout via Reuters

In May 2016, the inevitable happened: a Tesla driver died in a…

In what turned out to be a case of morbid irony, last night we reported that Josh Brown, the 40-year-old (non-)driver of the Tesla that fatally crashed into a truck on May 7 in Florida while in self-driving mode…

On May 7, 2016, a 40-year-old man named Joshua Brown died when his Tesla Model S sedan collided with a tractor-trailer crossing his path on US Highway 27A near Williston, Florida. Nearly three years later,…

Variants

Similar Incidents
