Description: NewsGuard has identified 17 TikTok accounts that, beginning in June 2023, used AI-generated voices to advance and amplify conspiracy theories and false claims. By September 25, 2023, these accounts had amassed over 336 million views and over 14.5 million likes. The videos include baseless claims involving public figures such as Barack Obama, Oprah Winfrey, and Jamie Foxx.

Entities

Alleged: ElevenLabs developed an AI system deployed by TikTok user @e.news.tv, TikTok user @d.news.tv, TikTok user @drphilshowtv, TikTok user @ynewstv2023 and TikTok users, which harmed Barack Obama, Oprah Winfrey, Jamie Foxx, Joan Rivers, Phil McGraw, Yahoo! News, E! News, TikTok and General public.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk

Human

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Intentional

Incident Reports

Reports Timeline

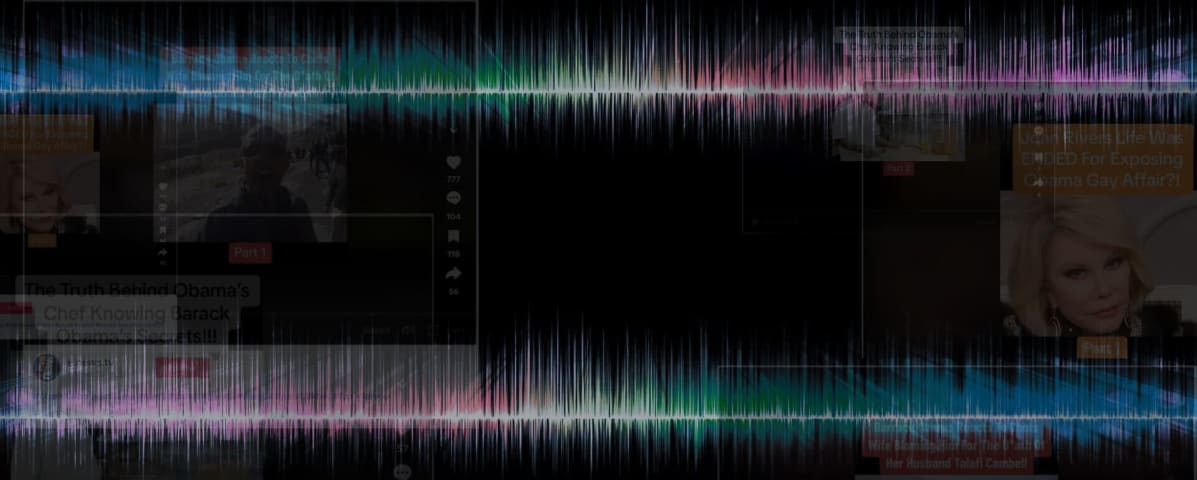

A network of 17 TikTok accounts appears to be leveraging hyper-realistic audio AI voice technology to gain hundreds of millions of views on conspiracy content that sounds authentically human, NewsGuard has found. The accounts seem to be usi…

In a slickly produced TikTok video, former President Barack Obama — or a voice eerily like his — can be heard defending himself against an explosive new conspiracy theory about the sudden death of his former chef.

“While I cannot comprehend…

Variants

A "variant" is an incident that shares the same causative factors, produces similar harms, and involves the same intelligent systems as a known AI incident. Rather than index variants as entirely separate incidents, we list variations of incidents under the first similar incident submitted to the database. Unlike other submission types to the incident database, variants are not required to have reporting in evidence external to the Incident Database. Learn more from the research paper.

Similar Incidents

Deepfake Obama Introduction of Deepfakes

· 29 reports

Wikipedia Vandalism Prevention Bot Loop

· 6 reports
