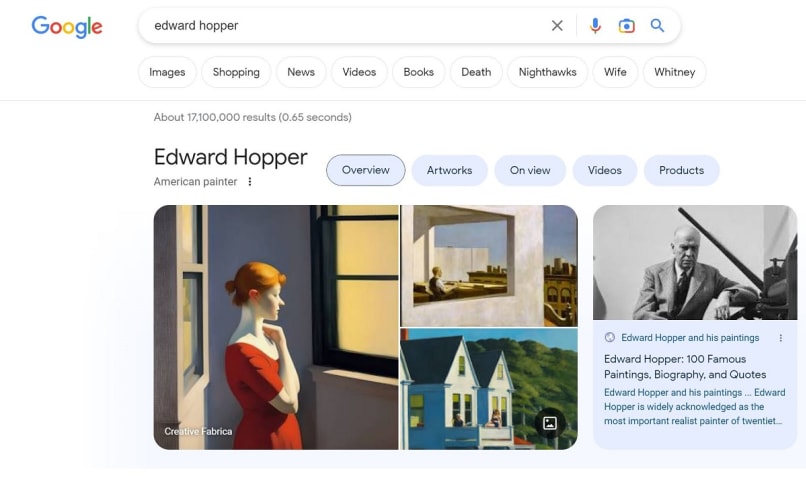

Description: Google's knowledge panel for the American artist Edward Hopper featured an AI-generated image that imitated the artist's style but was not one of his works. The image was removed soon after.

Entities

Alleged: Google developed and deployed an AI system, which harmed Google users.

Incident Stats

Risk Subdomain

A further 23 subdomains create an accessible and understandable classification of hazards and harms associated with AI.

3.1. False or misleading information

Risk Domain

The Domain Taxonomy of AI Risks classifies risks into seven AI risk domains: (1) Discrimination & toxicity, (2) Privacy & security, (3) Misinformation, (4) Malicious actors & misuse, (5) Human-computer interaction, (6) Socioeconomic & environmental harms, and (7) AI system safety, failures & limitations.

- Misinformation

Entity

Which, if any, entity is presented as the main cause of the risk

AI

Timing

The stage in the AI lifecycle at which the risk is presented as occurring

Post-deployment

Intent

Whether the risk is presented as occurring as an expected or unexpected outcome from pursuing a goal

Unintentional

Incident Reports

If you don't think visual AI is a problem, this is what comes up when you @Google Edward Hopper.

AI is already rewriting art history.

When you Googled the famed realist artist Edward Hopper this week, the first featured image that appeared was convincing enough. A lone woman, painted in the artist's signature soft, muted style, stared …

Variants

A "variant" is an incident that shares the same causative factors, produces similar harms, and involves the same intelligent systems as a known AI incident. Rather than index variants as entirely separate incidents, we list variations of incidents under the first similar incident submitted to the database. Unlike other submission types to the incident database, variants are not required to have reporting in evidence external to the Incident Database. Learn more from the research paper.